Proving Twitter Intentionally Allows Spambots

No, the solution isn't 'add more facial recognition'

And why do they?

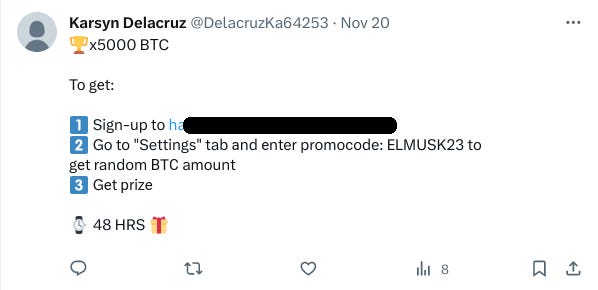

If you swing by a list The Daily Beagle has created, called “Spam Bot Examples”, you will find about 35 spambots (nearly all of which have the same copy-paste BTC winnings scam message). Here’s one such example:

35 might not seem impressive, but they were manually uncovered in a few minutes, and there are many more.

These bots span many hundreds of accounts that operate in interconnected networks. Usually they’ll have between 1 and 5 followers (typically all bots); however, some have 10 to 20:

These bulk follower accounts appear to be Command and Control (C&C) nodes, which spam Twitter logo images with ‘@’ tags to signal what targets to attack:

These logos don’t flag up for spam as the images contain randomly changed pixels (some examples highlighted with red circles), which trick any crude algorithm into thinking each image is distinct:
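To show why a few changed pixels fool a crude check but not a slightly smarter one, here is a minimal sketch. It assumes a tiny grayscale image held as a list of lists, and the helper names (`exact_digest`, `average_hash`, `hamming`) are our own illustration, not anything Twitter or Gab is known to run. Real perceptual hashes shrink the image first; this toy version skips that step.

```python
import hashlib

def exact_digest(pixels):
    """SHA-256 over the raw pixel values: any changed pixel changes the digest."""
    flat = bytes(v for row in pixels for v in row)
    return hashlib.sha256(flat).hexdigest()

def average_hash(pixels):
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [v for row in pixels for v in row]
    mean = sum(flat) / len(flat)
    return [1 if v > mean else 0 for v in flat]

def hamming(a, b):
    """Number of positions where two bit lists differ."""
    return sum(x != y for x, y in zip(a, b))

# Hypothetical 4x4 "logo" and a copy with two pixels nudged slightly.
logo = [
    [250, 250, 10, 10],
    [250, 250, 10, 10],
    [10, 10, 250, 250],
    [10, 10, 250, 250],
]
tweaked = [row[:] for row in logo]
tweaked[0][0] = 247
tweaked[3][1] = 13

# The exact digests differ, so a byte-for-byte check sees two distinct images.
print(exact_digest(logo) == exact_digest(tweaked))
# The perceptual bits are identical, so the tweak is invisible to this check.
print(hamming(average_hash(logo), average_hash(tweaked)))
```

A small Hamming distance between two hashes would be the signal that two uploads are really the same image with noise sprinkled on top.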

The bots in the C&C node’s follower list are painfully obvious, having no profile picture:

The ones with profile pictures are typically human trafficking/sex trafficking bot accounts. Take “ann”, for example: embedded in the profile bio is an obviously dubious link to a site questionably called “bombcams”:

These links on the human trafficking accounts are randomised, likely to evade crudely implemented blacklists:

It isn’t entirely clear if they also run malware on the websites; however, the probability is quite high given the unscrupulous behaviour.

Not all accounts followed are bots. For example, in this C&C bot’s follower list is what appears to be a real account (contact details removed):

It is likely the botnet additionally offers services to artificially inflate follower counts, and thus game Twitter engagement.

This is problematic when you consider Twitter’s revenue is driven by advertising metrics. It is likely Twitter intentionally allows bots to operate with impunity to inflate the interaction figures.

Remember, they have a suppression algorithm that blackballs dissenting voices. It does not appear to be used against spambots. An algorithm smart enough to infer intentions in posts and topics, but not smart enough to detect a blatantly obvious, generic scam? Bollocks.

These types of bots are overwhelmingly easy to stop and are the lowest hanging fruit. Any half-decent programmer with a mediocre skillset ought to be capable.

Proving They’re Easy To Beat

If one swings by Gab.com, a small scale social media outlet with, we believe, roughly 6 active employees, you will find Gab has practically no conventional spambots as far as the eye can see.

This is despite the fact they got attacked by over 50,000 bots at one point:

Having used the website, The Daily Beagle can testify it was indeed at one point completely flooded with the worst kind of spambots, more akin to the low quality bots seen above. No, it wasn’t solved by implementing a Shin Bet connected facial recognition agency.

So how did a small scale social media company beat spambots whilst billionaire tech mastermind genius ‘mad mRNA Elon Mask’ and Twitter’s 1k+ programmer staff can’t?

HunterKiller Bot Software

Gab makes use of an anti-bot design based on a concept known as ‘HunterKiller’, which is a bot-like program or algorithm that is designed specifically to hunt and kill bots. It assesses a profile and scores how bot-like said profile is.

Think of it like a spider-bot that moves from profile to profile mapping networks to poach closely associated botnets and sock accounts. Ideally, such software is triggered when someone files a report.

So things like a missing profile picture, unverified URLs in the bio, or following and being followed by other accounts with high bot scores all contribute. Accounts that flag as likely being bots trigger a deeper investigation of their contacts (followers and followings). Scoring is tweaked for specific combinations of words (so, for example, the words ‘win’, ‘BTC’ and ‘prize’ together would add to the bot score).
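The scoring idea can be sketched in a few lines. This is our own illustration, not Gab's actual code: the `Profile` fields, the weights and the `THRESHOLD` value are all assumptions picked to make the example readable.

```python
from dataclasses import dataclass, field

SCAM_WORDS = {"win", "btc", "prize"}  # word combination named in the article
THRESHOLD = 5.0                       # assumed cut-off for "likely a bot"

@dataclass
class Profile:
    name: str
    has_picture: bool = True
    bio_urls_verified: bool = True
    recent_posts: list = field(default_factory=list)
    contact_scores: list = field(default_factory=list)  # bot scores of contacts

def bot_score(p):
    """Add up weighted signals; every weight here is a made-up example value."""
    score = 0.0
    if not p.has_picture:
        score += 2.0
    if not p.bio_urls_verified:
        score += 2.0
    for post in p.recent_posts:
        words = {w.strip(".,!?:;").lower() for w in post.split()}
        if SCAM_WORDS <= words:  # all scam words appear together in one post
            score += 3.0
            break
    if p.contact_scores:
        flagged = sum(1 for s in p.contact_scores if s >= THRESHOLD)
        score += 3.0 * flagged / len(p.contact_scores)
    return score

spammer = Profile("bot1", has_picture=False,
                  recent_posts=["You WIN a BTC prize! Claim now"],
                  contact_scores=[6.0, 7.0])
human = Profile("alice", recent_posts=["I hope to win the match"],
                contact_scores=[0.0, 1.0, 6.0])

print(bot_score(spammer))  # 8.0
print(bot_score(human))    # 1.0
```

Note that a single scam word on its own (“win”) adds nothing; only the combination does, which keeps ordinary posters out of the net.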

Such a system can quickly unearth entire botnets by spidering their bot contacts. Many at-home programmers already do this when mapping Twitter networks; the only difference is Twitter doesn’t use such knowledge to squash botnets.
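Spidering a botnet is, at heart, a breadth-first walk over the follower graph that only expands accounts which already look like bots. The sketch below assumes the graph is a plain dictionary and that a `score` function already exists; `map_botnet` and the sample data are hypothetical.

```python
from collections import deque

def map_botnet(start, contacts, score, threshold=5.0):
    """Crawl outward from `start`, expanding only accounts that score as bots."""
    flagged = set()
    seen = {start}
    queue = deque([start])
    while queue:
        account = queue.popleft()
        if score(account) < threshold:
            continue  # looks human: do not crawl its contacts
        flagged.add(account)
        for other in contacts.get(account, ()):
            if other not in seen:
                seen.add(other)
                queue.append(other)
    return flagged

# Hypothetical follower/following graph and precomputed bot scores.
contacts = {
    "cc_node": ["bot_a", "bot_b", "real_user"],
    "bot_a": ["cc_node", "bot_c"],
    "bot_b": [],
    "real_user": ["friend"],
    "bot_c": [],
}
scores = {"cc_node": 9.0, "bot_a": 8.0, "bot_b": 7.0, "bot_c": 6.0,
          "real_user": 1.0, "friend": 0.0}

botnet = map_botnet("cc_node", contacts, lambda a: scores.get(a, 0.0))
print(sorted(botnet))  # ['bot_a', 'bot_b', 'bot_c', 'cc_node']
```

Because the crawl stops at accounts that look human, the real account in the C&C node's list is never expanded and its own contacts are left alone.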

This is on top of a battery of bot swarm mitigation measures Gab uses: accounts must ‘earn’ interaction points where for the first 24 hours they are limited in how much they can do or post. A trial period, if you will.

In normal anti-bot software, as accounts do non-bot things, such as replying, quoting, liking, uploading images, posting URLs and using DMs (read: interact with the service normally), their interaction points go up until they reach the maximum.
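A rough sketch of earned interaction points follows. The point values, the cap and the way the daily allowance scales are all assumptions for illustration; the article does not know Gab's real numbers.

```python
class TrialAccount:
    MAX_POINTS = 100  # assumed cap
    # Assumed points per normal action; unknown actions earn nothing.
    POINTS_PER_ACTION = {"reply": 2, "quote": 2, "like": 1,
                         "image": 3, "url": 3, "dm": 2}

    def __init__(self):
        self.points = 0

    def record(self, action):
        """Credit a normal interaction, never exceeding the cap."""
        earned = self.POINTS_PER_ACTION.get(action, 0)
        self.points = min(self.MAX_POINTS, self.points + earned)

    def daily_post_allowance(self):
        """Assumed rule: 5 posts for a fresh account, rising to 50 at the cap."""
        return 5 + (45 * self.points) // self.MAX_POINTS

acct = TrialAccount()
print(acct.daily_post_allowance())  # 5
for _ in range(50):
    acct.record("like")
print(acct.daily_post_allowance())  # 27
```

The design choice is that trust is earned by doing the things a spam script rarely bothers with, so a freshly registered bot starts out nearly mute.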

That said, even with that, they still have daily limits on how many posters can be followed, and on how quickly they can post in a given timeframe (usually 30 seconds). Gab posts being longer allows more thoughtful discussion, rather than kneejerk soundbites easily taken out of context (read: people are less bothered by the 30 second cap).
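The posting cap is the simplest piece to sketch. The class below is a hypothetical per-account cooldown using the 30 second figure from the text; the caller passes in the current time so the example stays deterministic.

```python
class PostLimiter:
    def __init__(self, cooldown_seconds=30.0):
        self.cooldown = cooldown_seconds
        self.last_post = {}  # account -> timestamp of last accepted post

    def try_post(self, account, now):
        """Accept the post only if the account's cooldown has elapsed."""
        last = self.last_post.get(account)
        if last is not None and now - last < self.cooldown:
            return False
        self.last_post[account] = now
        return True

limiter = PostLimiter()
print(limiter.try_post("ann", now=0.0))   # True
print(limiter.try_post("ann", now=10.0))  # False
print(limiter.try_post("ann", now=30.0))  # True
```

A rejected attempt does not reset the clock here; a stricter variant could restart the cooldown on every attempt to punish hammering.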

Duplicate messages are prohibited on an account-level basis (not simply a thread level, which is easily evaded); so once you publish something, you cannot republish it using the exact same wording.
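An account-level duplicate check can be sketched as a set of message fingerprints per account. `DuplicateFilter` is a hypothetical name, and normalising case and whitespace before hashing is our own assumption; the rule as described only covers the exact same wording.

```python
import hashlib
from collections import defaultdict

class DuplicateFilter:
    def __init__(self):
        self._seen = defaultdict(set)  # account -> fingerprints already posted

    @staticmethod
    def _fingerprint(text):
        # Assumption: collapse whitespace and case so trivial edits still match.
        normalised = " ".join(text.lower().split())
        return hashlib.sha256(normalised.encode("utf-8")).hexdigest()

    def allow(self, account, text):
        """Return False if this account has already published the same message."""
        fp = self._fingerprint(text)
        if fp in self._seen[account]:
            return False
        self._seen[account].add(fp)
        return True

dupes = DuplicateFilter()
print(dupes.allow("bot1", "You won 2 BTC!"))     # True
print(dupes.allow("bot1", "you  won 2 btc!"))    # False
print(dupes.allow("alice", "You won 2 BTC!"))    # True
```

Because the record is kept per account rather than per thread, hopping to a different thread with the same message does not help the spammer.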

Accounts that trigger a bot-like suspicion can be hit with random captchas (it is not clear if this is still a process at Gab). This prevents automated, streamlined solutions (e.g. solving the captcha manually upfront and then leaving the bot to run, which plagues places like 4chan). Failure to solve the captcha increases the bot score; successfully solving it decreases it.
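The captcha feedback loop might look something like the sketch below. The probabilities, the threshold and the one-point adjustment are invented for illustration, and all three function names are hypothetical.

```python
import random

def challenge_probability(bot_score, threshold=5.0):
    """Chance of a captcha on any given action; zero below the threshold."""
    if bot_score < threshold:
        return 0.0
    return min(1.0, 0.2 + 0.1 * (bot_score - threshold))

def should_challenge(bot_score, rng):
    """Randomly decide whether this action gets a captcha."""
    return rng.random() < challenge_probability(bot_score)

def apply_captcha_result(bot_score, solved):
    """Solving lowers the score (never below zero); failing raises it."""
    return max(0.0, bot_score - 1.0) if solved else bot_score + 1.0

rng = random.Random()
score = 6.0
if should_challenge(score, rng):
    # In a real system the outcome would come from the user's answer.
    score = apply_captcha_result(score, solved=False)
print(score)
```

Because the challenge can land on any action, solving one captcha by hand at sign-up and then leaving a script to run no longer works.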

Delay Is The Game

This process is intentionally slow so that bot operators cannot easily make new bot accounts to quickly replace the ones that have been banned. It makes spam less effective and forces bot designs to become more complex, making it harder for low skilled attackers to design working bots and increasing the failure rate of bots.

For a bot operator, each bot has an associated cost to run. Think of it like separate computer programs. So, for you, 30 seconds isn’t a problem, but for a bot operator, 30 seconds for every single bot running is a huge slowdown. Multiply it by hundreds or thousands.
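Some back-of-the-envelope arithmetic makes the point. The sketch assumes the 30 second cap is the only limit in play (it ignores trial periods, daily limits and bans), and the spam volume of 100,000 posts an hour is a made-up target.

```python
import math

def accounts_needed(posts_per_hour, cooldown_seconds=30):
    """How many accounts an operator must run to hit a target spam volume."""
    posts_per_account = 3600 // cooldown_seconds  # posts one account can make hourly
    return math.ceil(posts_per_hour / posts_per_account)

print(accounts_needed(100_000))                      # 834
print(accounts_needed(100_000, cooldown_seconds=1))  # 28
```

Under these assumptions the cooldown alone multiplies the number of accounts the operator has to register, warm up and keep alive by roughly thirty.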

Gab does this without shadowbanning accounts. Just making the terrain naturally harder for mass automation to navigate. Remember: this is just what 6 employees at a small social media company built.

Why Does Twitter Permit Low Quality Bots?

Why? Well, besides bloating metrics, one possibility is to enable propagandists to automate copy-paste spam posts to alter discourse, as evidenced here by Angelina 11:11.

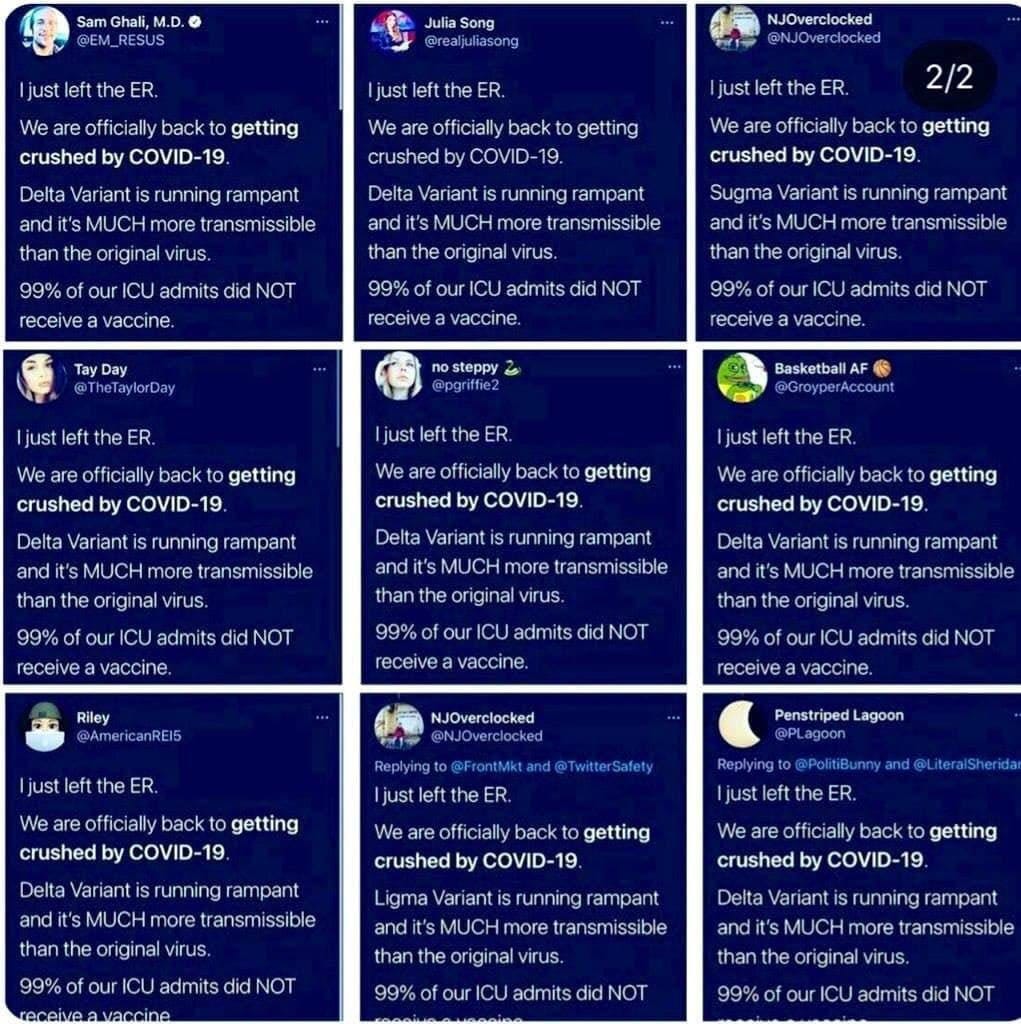

In this case, it is regarding the poison shots, where masses of fake accounts falsely claim they ‘just left the ER’ and fraudulently claim ‘99% of our ICU admits did not receive a vaccine’:

There have been similar incidents with other political outrage events. These bot accounts copy-paste spam the same calls for lockdowns:

Notice these bots have more sophistication than the blatantly obvious BTC scammers; they have profile pictures, more plausible sounding usernames, and more naturalistic seeming post interactions, including retweets and likes (check out NJOverlocked’s profile, for example).

Twitter doesn’t flush out the low hanging fruit because it would draw attention to the fact there’s higher hanging fruit they could also be taking down, namely, the government sponsored, automated propaganda. You know, like US military shills and the like.

Instead, Elon Mask just handwaves, shrugs his shoulders and says ‘we’ve tried nothing and we’re all out of ideas’.

Oh no, faceless bots!

Quick, into the pod citizen, and don your brain microchips and face scanners!

For the good of your health, of course!

What do you think, dear reader? Let us know in the comments.

I actually probably look like a bot as I block most followers (so many bots), have no picture or byline and am new. But The Conservative Treehouse has a pretty good theory that when Elon bought Twitter he didn’t realize that the feds already had official back door access, and they still do. If Elon blocks them, they will go for all out war. I think it is a difficult position for him to be in.